The Path from PID to Autonomy

Looking back through the history of automation, it’s not difficult to see that most advances are extensions of existing technologies. This holds at every level of automation, from the plant floor, where relays evolved into programmable logic controllers, to the enterprise, where all-encompassing ERP (enterprise resource planning) systems grew out of material requirements planning (MRP) software over the course of several years.

With autonomous systems, however, the advance can seem so vast that the origin point in traditional manufacturing systems is not always easy to see. This gap can make end users wary of autonomous technologies, but tracing the development path of autonomous systems can make these new technologies less intimidating.

Connecting the dots

At Rockwell Automation Fair 2021, Jordan Reynolds, global director of data science at Kalypso (a Rockwell Automation company), gave a presentation on autonomous systems that helped explain how these advanced neural systems, as they’re applied in industry, can be seen as extensions of the PID (proportional-integral-derivative) closed-loop control systems with which we’re all familiar.
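For readers who want a concrete reference point, the PID loop mentioned above can be sketched in a few lines of Python. This is a minimal illustration, not anything shown in the presentation: the `PID` class, the gains, and the first-order plant `dy/dt = -y + u` are all assumptions chosen to keep the example small.

```python
from dataclasses import dataclass


@dataclass
class PID:
    """Textbook discrete PID controller (illustrative gains, not tuned for any real process)."""
    kp: float
    ki: float
    kd: float
    integral: float = 0.0
    prev_error: float = 0.0

    def update(self, setpoint: float, measurement: float, dt: float) -> float:
        error = setpoint - measurement
        self.integral += error * dt                     # I term accumulates past error
        derivative = (error - self.prev_error) / dt     # D term reacts to the error trend
        self.prev_error = error
        return self.kp * error + self.ki * self.integral + self.kd * derivative


def simulate(steps: int = 2000, dt: float = 0.01, setpoint: float = 1.0) -> float:
    """Close the loop around a hypothetical first-order plant and return the final output."""
    pid = PID(kp=2.0, ki=1.0, kd=0.05)
    y = 0.0
    for _ in range(steps):
        u = pid.update(setpoint, y, dt)   # controller output from the measured value
        y += dt * (-y + u)                # Euler step of the assumed plant dy/dt = -y + u
    return y


final_output = simulate()
```

After 20 simulated seconds the output settles close to the setpoint, which is the whole job of the feedback loop: measure, compare, correct.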

The model that sets the stage for autonomy is created via hybrid modeling. Reynolds said a hybrid model is developed in two steps: first comes input from an engineer, followed by input from a data scientist who understands AI (artificial intelligence).

“There’s a delta between what an engineer knows and what a data scientist does to derive a model that can learn rather than being programmed,” said Reynolds. He stressed that, to develop an effective model for autonomous operations, you need the engineer to define basic principles and have the data scientist close out the gaps to ensure the model performs to standards. “This is hybrid modeling,” he said.
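One common way to picture this split is a first-principles model plus a data-driven correction fitted to whatever the first-principles model misses. The sketch below illustrates that general idea only. It is not Rockwell Automation's or Kalypso's method, and the function names, the linear physics model, and the sample data are all hypothetical.

```python
def physics_model(x: float) -> float:
    """Engineer's first-principles estimate (hypothetical: output is 2.0 times the input)."""
    return 2.0 * x


def fit_residual(xs, ys):
    """Data-driven step: least-squares line through what the physics model gets wrong."""
    residuals = [y - physics_model(x) for x, y in zip(xs, ys)]
    n = len(xs)
    mean_x = sum(xs) / n
    mean_r = sum(residuals) / n
    sxx = sum((x - mean_x) ** 2 for x in xs)
    sxr = sum((x - mean_x) * (r - mean_r) for x, r in zip(xs, residuals))
    slope = sxr / sxx
    intercept = mean_r - slope * mean_x
    return slope, intercept


def hybrid_model(x: float, slope: float, intercept: float) -> float:
    """Physics prediction plus the learned correction."""
    return physics_model(x) + slope * x + intercept


# Made-up plant data: the real process behaves like 2.5 * x + 1.0,
# so the physics model alone leaves a gap of 0.5 * x + 1.0.
xs = [0.0, 1.0, 2.0, 3.0, 4.0, 5.0]
ys = [2.5 * x + 1.0 for x in xs]

slope, intercept = fit_residual(xs, ys)
physics_only = physics_model(4.0)
hybrid = hybrid_model(4.0, slope, intercept)
```

On this made-up data the physics model alone predicts 8.0 at an input of 4.0, while the hybrid model recovers the true value of 11.0. The engineer supplies the structure and the fitted residual closes the gap.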

Autonomic control

Rockwell Automation is focusing on this area because it views autonomy problems as control problems.

“Feedforward control was one of the first predictive applications of control,” said Reynolds. “It expands on feedback control and provides early indications of a state that is yet to come so that it can be addressed proactively. Model predictive control (MPC) is a modern version of feedback control, where you use multivariable models to characterize how a system performs. We use those models to control a system better than we could with PID.”
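The difference between reacting and acting proactively can be shown with a small simulation. The sketch below assumes a hypothetical plant `dy/dt = -y + u + d` in which the disturbance `d` can be measured. The gains, the bias term, and the size of the disturbance are illustrative assumptions.

```python
def max_deviation(use_feedforward: bool, steps: int = 1000, dt: float = 0.01) -> float:
    """Return the largest deviation from setpoint when a measured disturbance steps in."""
    setpoint = 1.0
    kp = 2.0      # proportional feedback gain (assumed)
    bias = 1.0    # input that holds y at the setpoint when there is no disturbance
    y = setpoint
    worst = 0.0
    for k in range(steps):
        d = -0.5 if k >= 100 else 0.0      # disturbance arrives at t = 1 s
        u = bias + kp * (setpoint - y)     # feedback: acts only after y has moved
        if use_feedforward:
            u -= d                         # feedforward: cancel the measured disturbance
        y += dt * (-y + u + d)             # Euler step of the assumed plant
        worst = max(worst, abs(setpoint - y))
    return worst


feedback_only = max_deviation(use_feedforward=False)
with_feedforward = max_deviation(use_feedforward=True)
```

With feedback alone, the output sags by roughly 0.17 before the controller holds it. With the feedforward term, the disturbance is cancelled as it arrives and the output never leaves the setpoint. Real feedforward is rarely this exact, since it depends on how well the disturbance is measured and modeled.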

Reynolds noted that Rockwell Automation acquired Pavilion Technologies in 2007 for its MPC technology.

“MPC is good with highly predictive systems that don’t change that much,” said Reynolds. “But it falls short when recipes or parameters change. The MPC must be updated because it does not adapt on its own. That’s where adaptive control comes in. In adaptive control, the model doesn’t have to be perfect because it can adjust to changes.”
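The contrast Reynolds draws can be illustrated with a deliberately simple stand-in. The sketch below is not MPC. It uses a hypothetical static plant `y = gain * u` and a controller that inverts its model of that gain. The fixed version keeps the gain it was commissioned with, while the adaptive version corrects its estimate from each new measurement using a normalized gradient step. All names and numbers are assumptions.

```python
def final_error(adaptive: bool, steps: int = 100, setpoint: float = 1.0) -> float:
    """Return the absolute tracking error at the end of the run."""
    gain_estimate = 2.0   # model gain identified for the original recipe
    mu = 0.5              # adaptation rate (assumed)
    error = 0.0
    for k in range(steps):
        true_gain = 2.0 if k < 50 else 3.0    # recipe change halfway through the run
        u = setpoint / gain_estimate           # invert the model to choose the input
        y = true_gain * u                      # hypothetical static plant
        error = setpoint - y
        if adaptive:
            # Move the estimate toward whatever gain explains the latest measurement.
            prediction_error = y - gain_estimate * u
            gain_estimate += mu * u * prediction_error / (u * u + 1e-9)
    return abs(error)


fixed_model_error = final_error(adaptive=False)
adaptive_model_error = final_error(adaptive=True)
```

After the recipe change, the fixed-model controller is left with a persistent error of 0.5, while the adaptive version drives its error back toward zero within a few dozen steps without anyone retuning it.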

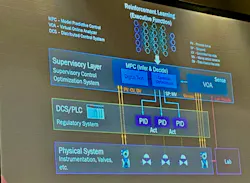

Explaining the connections between PID and autonomous operations that use Reinforcement Learning, Reynolds said PID ensures the system complies with the setpoints, whereas MPC determines those setpoints based on the multiple inputs and outputs of the system. Going further, Reinforcement Learning creates an executive function that can strategize.

Reynolds provided a car racing example to help illustrate this concept. MPC keeps the car in the lane, he said, while Reinforcement Learning helps you strategize about how to win the race, e.g., when to change tires, when to take the inside lane, etc.

Deep learning neural networks, like those used in Reinforcement Learning, are where most innovation has come from as industry advances toward greater use of autonomous systems. The problem is that, even though neural networks are highly accurate, they’re not very transparent or explainable. “An engineer can’t easily get an answer as to why decisions are made by the neural network,” Reynolds said.

That’s why Rockwell Automation focuses on deep symbolic regression to explain how an AI control model works. Reynolds said Rockwell Automation uses this approach in its FactoryTalk Analytics LogixAI product. According to Rockwell Automation, FactoryTalk Analytics LogixAI applies analytics within the controller application to achieve process improvement. It is an add-on module for ControlLogix controllers that streams controller data over the backplane to build predictive models.
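The appeal of symbolic regression is that the result is a formula an engineer can read, rather than a set of network weights. The toy sketch below shows only the basic idea: search a small space of candidate expressions and keep the one that best fits the data. It is not how LogixAI works and not a deep symbolic regression implementation. The candidate functions, the coefficient grid, and the sample data are all assumptions.

```python
import itertools
import math

# Tiny, hand-picked expression space (assumed for illustration).
BASIS = {
    "x": lambda x: x,
    "x**2": lambda x: x * x,
    "sqrt(x)": math.sqrt,
    "log(1+x)": math.log1p,
}
COEFFS = [0.5, 1.0, 2.0, 3.0]


def symbolic_regression(xs, ys) -> str:
    """Exhaustively score every a*f(x) + b candidate and return the best as readable text."""
    best_sse = None
    best_formula = ""
    for (name, f), a, b in itertools.product(BASIS.items(), COEFFS, COEFFS):
        sse = sum((a * f(x) + b - y) ** 2 for x, y in zip(xs, ys))
        if best_sse is None or sse < best_sse:
            best_sse = sse
            best_formula = f"{a}*{name} + {b}"
    return best_formula


# Made-up measurements generated from y = 2*x**2 + 1.
xs = [0.0, 1.0, 2.0, 3.0, 4.0]
ys = [2.0 * x * x + 1.0 for x in xs]

formula = symbolic_regression(xs, ys)
```

On this data the search returns the string `2.0*x**2 + 1.0`, which an engineer can inspect and check against process knowledge. Practical tools search far larger expression spaces and cannot rely on brute force.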