Validating Automation Machine Learning Models – The Oracle Problem

One of my more aspirational career goals has been to increase the efficiency of validation testing without lowering the quality. Testing of automated systems is essential to ensure quality in both the control system and the product. Traditionally, commissioning consisted of comparing the system response against a pre-approved set of outputs. This method has served the industry well where all code execution paths were deterministic and knowable.

As adoption of machine learning (ML) and artificial intelligence (AI) on the plant floor becomes more common, it is becoming clear that newly deployed ML models are subverting traditional testing techniques. It is not always possible, or practical, to identify every execution path of a modern model developed through supervised learning. Protocols developed for traditional state-machine logic or sequences will require modernization to continue providing the same level of confidence.

The current generation of commissioning protocols is based on automated software testing techniques established in the 1980s and 1990s using what are known as test "Oracles". An Oracle in this sense is the absolute authority (i.e., the source of truth) used to determine whether a given test passes or fails. The Oracle determines the correct output for a given input. This role can be filled by design documentation, user requirements, or experienced subject matter experts.
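To make the idea concrete, here is a minimal sketch of an explicit test driven by an Oracle. The permissive logic, the signal names, and the expected-output table are all hypothetical; in a real protocol the table would come from the approved design documents or user requirements.

```python
# Hypothetical expected-output table playing the role of the Oracle.
# Keys are (start_button, guard_closed); values are the expected motor_run.
ORACLE = {
    (False, False): False,
    (False, True): False,
    (True, False): False,
    (True, True): True,
}


def motor_permissive(start_button: bool, guard_closed: bool) -> bool:
    """Hypothetical logic under test: run only with start pressed and guard closed."""
    return start_button and guard_closed


def run_explicit_tests(logic, oracle):
    """Return every input whose actual output disagrees with the Oracle."""
    return [inputs for inputs, expected in oracle.items()
            if logic(*inputs) != expected]


failures = run_explicit_tests(motor_permissive, ORACLE)
print("PASS" if not failures else f"FAIL: {failures}")
```

This works only because every input combination can be listed in advance, which is exactly the property that large ML input domains lack.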

With the increasing use of ML applications on the plant floor, automation control software will begin falling into the non-testable class of software. The input domains are large, the boundaries are difficult to define, and environmental preconditions determine how the results should be interpreted. The current reliance on explicit testing will need to evolve and leverage implicit testing methods to stay relevant.

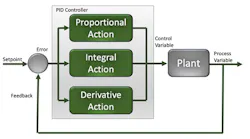

Validation testing today falls on a spectrum between explicit and implicit testing. An excellent example of implicit testing in legacy systems is verifying a PID control loop. While the mathematical model of a PID is known, for all practical purposes a PID is typically tested as a black box that takes an input, applies a mathematical model over time, and sets an output. It is very common to field-test PIDs during commissioning. However, the testing relies heavily on the tester carefully controlling the inputs to get the right output for a pass/fail result. Once the loop is in production or paired with cascaded loops, controlling a complex set of real-world inputs borders on the impossible.

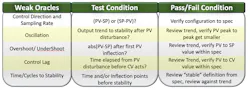

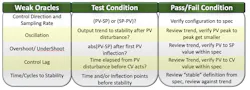

Even then, most engineers can quickly determine if a process isn’t controlling correctly. Abnormal conditions such as inverted control response, oscillation, or just bad tuning parameters are recognizable to the trained eye. For a consistent and repeatable test that doesn’t rely on the skill of the observer, the testing should instead rely on a series of overlapping weak Oracles to implicitly verify correct functionality.
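As a rough illustration of overlapping weak Oracles, the sketch below applies four independent checks to a recorded step response. None of them proves the loop is correct, but together they catch the failure modes named above. The function name, the thresholds, and the synthetic data are assumptions made for this example, not values from any standard.

```python
def check_step_response(pv, setpoint, max_overshoot=0.2,
                        max_crossings=4, settle_band=0.05):
    """Apply several weak Oracles to a recorded step response.

    pv       -- process-variable samples, starting at the moment of the step
    setpoint -- the new setpoint after the step
    Thresholds are illustrative and expressed as fractions of the step size.
    """
    step = setpoint - pv[0]
    if step == 0:
        raise ValueError("no step change to evaluate")
    # Normalize so 0.0 is the starting value and 1.0 is the setpoint.
    norm = [(x - pv[0]) / step for x in pv]
    tail = norm[-max(1, len(norm) // 10):]  # last 10% of the record
    # Count how many times the response crosses the setpoint.
    crossings = sum(1 for a, b in zip(norm, norm[1:])
                    if (a - 1.0) * (b - 1.0) < 0)
    return {
        "moves_toward_setpoint": norm[-1] > 0.5,  # catches inverted action
        "overshoot_bounded": max(norm) <= 1.0 + max_overshoot,
        "not_oscillating": crossings <= max_crossings,
        "settled": all(abs(x - 1.0) <= settle_band for x in tail),
    }


# Synthetic responses to a step from 50 to 60 (made-up data).
good = [50 + 10 * (1 - 0.8 ** k) for k in range(60)]
inverted = [50 - 10 * (1 - 0.8 ** k) for k in range(60)]
oscillating = [50] + [60 + 3 * (-1) ** k for k in range(59)]

for name, trace in [("good", good), ("inverted", inverted),
                    ("oscillating", oscillating)]:
    print(name, check_step_response(trace, 60.0))
```

Each check on its own is easy to fool; the value comes from requiring all of them to pass on the same record, without depending on who is watching the trend.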

The PID example above illustrates the problem of validating machine learning algorithms where the number of inputs may be variable, the mathematical model is unknown, and the output includes a confidence factor. These new algorithms require a different approach to implicit testing. Developing a robust validation strategy should begin now.

A human Oracle will still be needed for the foreseeable future, but we can begin integrating the same advanced tools that other industries have used to address similar issues in verifying the efficacy of machine learning models:

Metamorphic testing was developed to help mitigate the Oracle problem in application software testing. Grossly simplified, rather than checking a single output against a known correct value, the methodology checks whether the outputs for several related inputs hold the relationship they should, and flags a possible malfunction when they do not. A possible application would be controlling pH when the volume of material to be controlled is known. If the system starts dumping excessive neutralization solution, it's probably safe to assume something is wrong and we're just making salt. Metamorphic testing, in this case, helps constrain testable values that cannot be precisely pre-defined in stochastic systems.
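The pH example can be sketched as a pair of metamorphic relations. The `dose_model` function below is a hypothetical stand-in for the model under test, using an idealized strong-acid, unbuffered approximation; the two relations and the tolerance are assumptions chosen for illustration. Note that the test never needs to know the correct dose for any single batch.

```python
import random


def dose_model(volume_l: float, ph: float) -> float:
    """Hypothetical stand-in for the model under test.

    Returns moles of base needed to bring an acidic batch to pH 7,
    using an idealized strong-acid, unbuffered approximation.
    """
    return volume_l * max(0.0, 10.0 ** (-ph) - 10.0 ** (-7.0))


def check_metamorphic_relations(model, trials=200, seed=0, rel_tol=0.05):
    """Return a list of violations of two illustrative metamorphic relations."""
    rng = random.Random(seed)  # fixed seed keeps the test repeatable
    violations = []
    for _ in range(trials):
        volume = rng.uniform(100.0, 10_000.0)
        ph = rng.uniform(2.0, 6.5)
        base = model(volume, ph)

        # MR1: doubling the volume at the same pH should roughly double the dose.
        doubled = model(2.0 * volume, ph)
        if abs(doubled - 2.0 * base) > rel_tol * 2.0 * base:
            violations.append(("MR1", volume, ph))

        # MR2: a batch closer to neutral should never need more reagent.
        if model(volume, ph + 0.3) > base:
            violations.append(("MR2", volume, ph))
    return violations


print(len(check_metamorphic_relations(dose_model)), "violations")
```

A model that ignores the batch volume, for instance, would fail the first relation on every trial even though no individual output could be called wrong in isolation.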

Cross-validation against an independent model is another possible tool. In this sense (distinct from the k-fold cross-validation used when training ML models), it compares the outputs of two independent mathematical models of a system to determine whether they agree. The major drawback is that it doubles the work and processing required. But as our industry moves toward process modeling before equipment is built, this initial model may be used to validate the real-world process.
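A minimal sketch of such an agreement check follows. Both models, their units, and the tolerances are hypothetical; the point is only that a disagreement flags an operating region for review without saying which model is wrong.

```python
def disagreement_report(model_a, model_b, inputs, abs_tol=0.5, rel_tol=0.05):
    """Compare two independent models over the same inputs.

    Returns (input, a, b) for every input where the outputs differ by more
    than abs_tol + rel_tol * |b|. Tolerances are illustrative.
    """
    flagged = []
    for x in inputs:
        a, b = model_a(x), model_b(x)
        if abs(a - b) > abs_tol + rel_tol * abs(b):
            flagged.append((x, a, b))
    return flagged


def design_model(flow_lpm):
    """Hypothetical pre-build process model: assumed linear response."""
    return 2.0 * flow_lpm


def learned_model(flow_lpm):
    """Hypothetical learned model that drifts at high flow rates."""
    drift = 0.02 * flow_lpm ** 2 if flow_lpm > 40 else 0.0
    return 2.0 * flow_lpm + drift


flagged = disagreement_report(learned_model, design_model, range(0, 101, 10))
print([x for x, _, _ in flagged])
```

Here the two models agree up to a flow of 40 and diverge above it, so the report narrows the human Oracle's attention to the high-flow region.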

While these tools do not solve the Oracle problem, preparing now can help adapt existing tools from other industries to advance implicit testing for validation. Machine learning models are being deployed now. The pursuit of advanced testing methodologies is a worthy aspirational goal for the automation industry.

Bill Mueller is the founder and senior engineer of Lucid Automation and Security, an integrator member of the Control System Integrators Association (CSIA). For more information about Lucid Automation and Security, visit its profile on the Industrial Automation Exchange.
