Understanding Virtualization for Industrial Automation

At the virtual 2020 Ignition Community Conference, held by Inductive Automation, Mike Fahrion, chief technology officer at Advantech B+B SmartWorx, led a session explaining the use of virtualization in industrial automation applications. Though there has been plenty of coverage of virtualization in manufacturing (including articles published by Automation World), a good deal of confusion about the topic remains. Fahrion’s presentation offered straightforward explanations to help clear it up, and in this article we’ll highlight the main points.

Dedicated vs. virtual

To set the scene for his explanation of virtualization, Fahrion offered several examples: buying a camcorder in the 1990s or early 2000s when you wanted to record video, buying an alarm clock to make sure you woke up on time, or buying a GPS device before going on a remote hike. These are all dedicated appliances we once bought to do one specific thing. Today, each of those functions is typically just an app on a general-purpose device such as a smartphone.

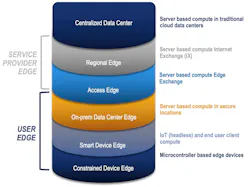

That’s where virtualization comes in. With this technology we have access to “software-defined appliances running in common hardware platforms [so that] when we want to add a function, we simply add another software application to extend the value and the flexibility of the hardware we already own,” he said. “This is ideal for today’s environment of digital transformation because we not only reduce the quantity of the hardware we need to buy, but we also increase our agility and the adaptability such that we can more easily respond to changing business requirements. And [virtualization] allows us to do this without big rip-and-replace cycles and it reduces vendor lock-in.”

From data centers to industry

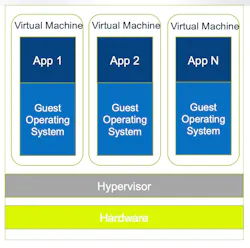

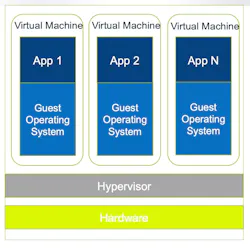

Fahrion noted that virtualization isn't new. “We've been doing it on servers and in data centers for years,” he said. In data centers, virtual machines work by adding a layer of software called a hypervisor on top of the computing hardware; the hypervisor allows one physical machine to run multiple virtual machines. Fahrion added that Advantech B+B SmartWorx’s “virtual machines sit on top of that hypervisor so that each virtual machine bundles together an operating system, the application itself, and any of its dependencies, libraries, or configurations that are needed to run that application. And these virtual machines are easily replicated across different hardware platforms [and] are easy to scale up or down.”
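To make the layering concrete, the Python sketch below models a hypervisor dividing one physical host among several virtual machines, each bundling its own operating system, application, and dependencies. The class names, resource figures, and the simple no-overcommit admission rule are illustrative assumptions; real hypervisors can overcommit CPU and memory and schedule resources far more flexibly.

```python
from __future__ import annotations

from dataclasses import dataclass, field


@dataclass
class VirtualMachine:
    # Each VM bundles a full guest OS with the application and its dependencies.
    name: str
    guest_os: str
    application: str
    dependencies: list[str]
    vcpus: int
    memory_gb: int


@dataclass
class Hypervisor:
    # Physical resources of the single host the hypervisor runs on.
    host_cpus: int
    host_memory_gb: int
    vms: list[VirtualMachine] = field(default_factory=list)

    def free_resources(self) -> tuple[int, int]:
        used_cpu = sum(vm.vcpus for vm in self.vms)
        used_mem = sum(vm.memory_gb for vm in self.vms)
        return self.host_cpus - used_cpu, self.host_memory_gb - used_mem

    def launch(self, vm: VirtualMachine) -> bool:
        # Simplified admission rule (assumption): never overcommit CPU or memory.
        free_cpu, free_mem = self.free_resources()
        if vm.vcpus <= free_cpu and vm.memory_gb <= free_mem:
            self.vms.append(vm)
            return True
        return False


# Hypothetical edge server shared by VMs running different operating systems.
hv = Hypervisor(host_cpus=8, host_memory_gb=32)
print(hv.launch(VirtualMachine("scada", "Linux", "SCADA server", ["jre"], 4, 16)))  # True
print(hv.launch(VirtualMachine("mes", "Windows", "MES client", [".NET"], 4, 16)))   # True
print(hv.launch(VirtualMachine("extra", "Linux", "Historian", ["db"], 2, 4)))       # False: host is full
print(hv.free_resources())  # (0, 0)
```

Because each VM carries its own guest OS, a single host can run a Linux workload and a Windows workload side by side, at the cost of duplicating an operating system per VM.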

Containers are a “leaner form of virtualization,” explained Fahrion, because they “share the host operating system rather than replicating the operating system in every container.” As a result, only one operating system runs on the system, and each container holds just the application and its dependencies, libraries, and configurations.
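The saving Fahrion describes is simple arithmetic: N virtual machines each carry their own copy of the operating system, while N containers share the host’s single copy. The sketch below uses hypothetical sizes (a 2,000 MB operating system and 150 MB per application with its libraries) and ignores the hypervisor’s own footprint, purely to show the shape of the calculation; real image sizes vary widely.

```python
def vm_footprint_mb(n_apps: int, os_mb: int, app_mb: int) -> int:
    # Every virtual machine carries its own full copy of the operating system.
    return n_apps * (os_mb + app_mb)


def container_footprint_mb(n_apps: int, os_mb: int, app_mb: int) -> int:
    # Containers share the single host OS; each adds only the app and its dependencies.
    return os_mb + n_apps * app_mb


# Hypothetical sizes, for illustration only.
OS_MB, APP_MB = 2000, 150
for n in (1, 5, 10):
    print(f"{n:>2} apps: VMs {vm_footprint_mb(n, OS_MB, APP_MB):>6} MB, "
          f"containers {container_footprint_mb(n, OS_MB, APP_MB):>5} MB")
# 10 apps: 21500 MB as VMs vs 3500 MB as containers
```

With one application the two approaches cost the same; the gap grows with every additional application, because the container model never pays for a second operating system.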

He added that libraries in the host operating system that are shared across containers do not need to be replicated in each container. “Containers are isolated from each other and from the outside world; we create interconnections over virtual networks within the containers or bridge them out to the outside world.”

Because each container essentially gets its own virtual network and has no native access to outside sockets or to other containers, Fahrion said that a correctly managed container setup can dramatically reduce the number of attack vectors on your network.
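The isolation rule can be modeled as a small reachability check: two containers can talk only if they are attached to a common virtual network, and a container reaches the outside world only through a network that has been explicitly bridged out. This is a simplified, hypothetical model (the container names, `plant_net`, and `BRIDGED_NETWORKS` are invented for illustration); actual container runtimes have their own networking defaults, so consult your platform’s documentation rather than relying on this sketch.

```python
from __future__ import annotations

from dataclasses import dataclass, field


@dataclass
class Container:
    name: str
    networks: set[str] = field(default_factory=set)


# Hypothetical: the only virtual network that has been bridged to the outside world.
BRIDGED_NETWORKS = {"uplink"}


def can_talk(a: Container, b: Container) -> bool:
    # Containers are isolated unless they share at least one virtual network.
    return bool(a.networks & b.networks)


def can_reach_outside(c: Container) -> bool:
    # Outside access exists only through a network that has been bridged out.
    return bool(c.networks & BRIDGED_NETWORKS)


plc_driver = Container("plc_driver", {"plant_net"})
historian = Container("historian", {"plant_net"})
reporting = Container("reporting")                      # attached to nothing: fully isolated
mqtt_gateway = Container("mqtt_gateway", {"plant_net", "uplink"})

print(can_talk(plc_driver, historian))    # True
print(can_talk(plc_driver, reporting))    # False
print(can_reach_outside(historian))       # False
print(can_reach_outside(mqtt_gateway))    # True
```

In this model every path in or out is an explicit attachment or bridge, which is the sense in which a well-managed setup narrows the attack surface: only the gateway container is exposed, and only the paths you configured exist.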

Additional advantages

Beyond the security advantages of containers, Fahrion said another major advantage is resiliency. “When you build one monolithic application that holds your user interface and your databases and everything else, if one part of that compiled application crashes, the whole thing is gone and needs to be restarted,” he said. “In a container, however, we isolate each of those functions from each other. This means that one crashed application doesn't bring down the whole machine. We just need to restart that particular container, which can be set up to happen automatically.”
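The restart-only-what-crashed behavior can be sketched with ordinary operating-system processes standing in for containers: each service runs in its own Python interpreter process, so a crash in one cannot take down the others, and the supervisor restarts only the service that failed, up to a retry limit. This is an analogy rather than a container runtime, and the service names and retry limit are hypothetical. Container engines expose the same idea as configuration, for example Docker’s `--restart=on-failure` policy.

```python
import subprocess
import sys


def supervise(name: str, code: str, max_restarts: int = 3) -> dict:
    """Run one service in its own process; restart it only if it exits with an error."""
    runs = 0
    while True:
        runs += 1
        # Each run is a separate interpreter process, isolated from the
        # supervisor and from every other service.
        result = subprocess.run(
            [sys.executable, "-c", code], capture_output=True, text=True
        )
        if result.returncode == 0:
            return {"service": name, "runs": runs, "status": "ok"}
        if runs > max_restarts:
            # One initial run plus max_restarts retries have all failed.
            return {"service": name, "runs": runs, "status": "failed"}


# Hypothetical services: the historian crashes every time it starts.
SERVICES = {
    "hmi": "print('HMI running')",
    "historian": "raise RuntimeError('disk error')",
    "alarms": "print('alarm service running')",
}

report = [supervise(name, code) for name, code in SERVICES.items()]
for entry in report:
    print(entry)
```

For brevity this supervisor runs the services one after another, whereas a real orchestrator runs them concurrently; the isolation point is the same, since the historian’s repeated crashes never affect the HMI or alarm results.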

Scale is another important feature of virtualization. Because new containers can be deployed in seconds, Fahrion explained, it’s “very easy to deploy a single solution across thousands of pieces of hardware and thousands of locations.” In this way, containers eliminate the familiar problem of an application working on one machine but not on another, along with the debugging cycles needed to fix it.
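One reason a container behaves the same everywhere is that its image is an immutable bundle identified by a content digest, so every site can verify it is running exactly the same bits. The sketch below imitates that idea with a SHA-256 digest over a hypothetical application bundle (app version, pinned libraries, configuration). The bundle fields, library names, and site names are invented for illustration, and this is not the image format any container engine actually uses.

```python
import copy
import hashlib
import json


def bundle_digest(bundle: dict) -> str:
    # Serialize deterministically (sorted keys) so identical content gives an identical hash.
    canonical = json.dumps(bundle, sort_keys=True, separators=(",", ":"))
    return "sha256:" + hashlib.sha256(canonical.encode("utf-8")).hexdigest()


def find_drift(reported: dict, expected: str) -> list:
    # Return the sites whose running bundle does not match the released digest.
    return sorted(site for site, digest in reported.items() if digest != expected)


# Hypothetical release: the application plus everything it needs to run.
release_bundle = {
    "app": "line-monitor 1.4.0",
    "libraries": {"opcua-client": "0.98", "sqlite": "3.45"},
    "config": {"poll_interval_s": 1},
}
release = bundle_digest(release_bundle)

# Each of 1,000 hypothetical sites deploys its own copy of the same bundle.
reported = {
    f"plant-{i:04d}": bundle_digest(copy.deepcopy(release_bundle))
    for i in range(1, 1001)
}

# Simulate one site where a library was hand-patched outside the release.
patched = copy.deepcopy(release_bundle)
patched["libraries"]["sqlite"] = "3.46"
reported["plant-0042"] = bundle_digest(patched)

print(find_drift(reported, release))  # ['plant-0042']
```

Matching digests mean matching content, so the only site that can behave differently is the one whose digest differs, which is exactly the site the check flags.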

Speed and agility are another set of advantages offered by containers. “If you need to update one of your virtualized, containerized applications, you can just stop that container and update it without taking down the [whole] piece of hardware or the rest of the applications,” said Fahrion. “Or maybe you want your applications to always update to the most recent version or maybe you never want that to happen and you want them to always stay fixed on a very specific version—either way that can all be done with a simple command switch. As often happens in the world of industrial IoT and digital transformation, we discover new use cases as we go, so it's very common to want to add new features, like adding an artificial intelligence inference engine to the edge in a containerized solution. That's very easy to do by simply adding another container—and you don't have to worry about it impacting the rest of your application or what it’s going to do to your user interface or database because everything is isolated.”
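The “always latest” versus “stay fixed” choice can be expressed as a per-container version policy. The Python sketch below uses a hypothetical in-memory registry and treats `latest` as “highest published version,” which is an assumption of this model: in container registries `latest` is simply a tag name, and automatic updating requires a separate tool or process. Updating or adding one container touches only that entry, leaving the others running unchanged.

```python
from __future__ import annotations


def parse_version(v: str) -> tuple[int, ...]:
    # "2.10.1" -> (2, 10, 1), so versions compare numerically, not alphabetically.
    return tuple(int(part) for part in v.split("."))


# Hypothetical registry: image name -> versions that have been published.
REGISTRY = {
    "historian": ["1.9.0", "2.0.0", "2.10.1"],
    "hmi": ["3.1.0", "3.2.0"],
    "ai-inference": ["0.4.0"],
}


def resolve(image: str, policy: str) -> str:
    """Map a policy ('latest' or an exact version) to a concrete version."""
    versions = REGISTRY[image]
    if policy == "latest":
        return max(versions, key=parse_version)
    if policy not in versions:
        raise ValueError(f"{image}:{policy} is not published")
    return policy


class EdgeHost:
    def __init__(self, policies: dict[str, str]):
        # policies: container name -> "latest" or a pinned version string
        self.policies = dict(policies)
        self.running = {name: resolve(name, p) for name, p in self.policies.items()}

    def update(self, name: str) -> None:
        # Replace only this container; all others keep running unchanged.
        self.running[name] = resolve(name, self.policies[name])

    def add(self, name: str, policy: str) -> None:
        # A new capability is one more container; existing ones are not touched.
        self.policies[name] = policy
        self.running[name] = resolve(name, policy)


host = EdgeHost({"historian": "latest", "hmi": "3.1.0"})
print(host.running)  # {'historian': '2.10.1', 'hmi': '3.1.0'}

REGISTRY["historian"].append("2.11.0")  # new releases are published
REGISTRY["hmi"].append("3.3.0")
host.update("historian")
host.update("hmi")
print(host.running)  # {'historian': '2.11.0', 'hmi': '3.1.0'}

host.add("ai-inference", "latest")
print(host.running["ai-inference"])  # 0.4.0
```

The pinned HMI ignores the new 3.3.0 release, the floating historian picks up 2.11.0, and adding the inference engine leaves both untouched.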