When it comes to implementing the Internet of Things concept as a means of collecting data for analysis, the manufacturing and processing industries are better suited than most others to take part. The reason for this is that the Internet of Things requires an essential ingredient—data. And that’s something the manufacturing and processing industries have always had an abundance of because of their vast base of installed sensors, controllers and actuators.

But therein lies the problem. Though industry has a cornucopia of data-gathering devices, most of them were installed long before the Internet of Things (IoT) concept came into being. In many cases, these devices were placed into service before the Internet itself came into common use.

Since no company can or will undergo an entire rip-and-replace process, with its inherent downtime and astronomical costs, the challenge lies in how to effectively upgrade industry’s automation and control equipment for use in IoT applications. This need has led numerous automation and software companies to provide a variety of technological options to bridge the gap between installed legacy device functionality and IoT. One of the more prominent forces behind this movement to bring legacy control into the IoT era is the Open Process Automation Forum (OPAF).

The OPAF, a working group within The Open Group, is a vendor- and technology-neutral industry consortium focused on developing a standards-based, secure and interoperable process control architecture that can be used across multiple industries.

Following ExxonMobil’s initial call last year for the kind of open industrial software architecture now being promoted by OPAF, the entry of some non-traditional players into this arena was not entirely unexpected. We’ve already seen it take place through several IoT-related partnerships and now we’re seeing it in the increasing entry of IT players into the industrial space. The reason: Well-established IT applications are having an increasingly significant impact on industrial operations, especially with the advent of IoT applications.

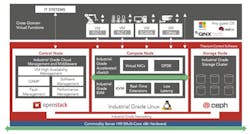

At the Embedded World event in Nuremberg last week, Wind River—a company known primarily for IoT operating systems, board support packages, and device management and simulation products—announced its entry into the open automation platform arena with its Wind River Titanium Control product. This software platform, which is based entirely on open standards (as directed by the OPAF), is an on-premise cloud infrastructure that is reportedly able to virtualize traditional industrial physical devices and deliver the high performance, high availability, flexibility and low latency required by industry via these virtualized devices. In other words, by virtualizing a plant’s existing physical infrastructure, the digital twins of these devices enable legacy equipment to function in the IoT realm, with data shuttling back and forth between the physical and virtual devices in real time.

While virtualization of enterprise IT platforms for server redundancy applications is a well-established practice, Wind River says that its Titanium Control software differs from standard IT virtualization in that it “provides high reliability for industrial applications and services deployed at the network edge”—i.e., the field devices core to the physical automation layer in industry.

Wind River cites the following features as being key to its Titanium Control software:

- Using standard, commercial off-the-shelf software for on-premise cloud and virtualization, including Linux, real-time Kernel-based Virtual Machine (KVM) and OpenStack.

- High performance and high availability with accelerated vSwitch and virtual machine to virtual machine communication, plus virtual infrastructure management.

- Security features including isolation, secure boot and Trusted Platform Module.

- Scalability from two to over 100 compute nodes.

- Hitless software updates and patching with no interruption to services or applications.

Wind River’s Chief Strategy Officer, Gareth Noyes, said at the Embedded World event that the industry drivers that led Wind River to develop this new software platform include the cost to maintain and upgrade existing control systems and their triple redundancy configurations; the shortening obsolescence cycle for all technologies; industry-wide efforts around capital cost reduction; poor component interoperability among most industrial devices; and insufficient security for legacy systems.

“Technology enablers like virtualization and cloud options make [bringing] these kinds of changes [to legacy industrial devices] more viable. Also, [the rapid advance of machine learning across industry] requires a different computer architecture. All of which means there’s a need to quickly modernize industrial control systems.”

Noyes cited ExxonMobil’s call, and industry’s ensuing interest via the OPAF, to flatten the seven-layer OSI architecture as being another important driver of Wind River’s development of the Titanium Control platform.

“We have seen virtualization happening in other mature industries, such as avionics and telecom, for several years now,” Noyes said. “In fact, it started in avionics 20 years ago with Integrated Modular Avionics.” Work in this area led to the development of a certified safe and secure architecture for the operation of multiple, critical avionics applications and systems while incorporating general purpose virtualized environments.

Adding to this point, Noyes noted that the Titanium Control platform is equally applicable to continuous and discrete control systems, even though much of the early interest in the platform has thus far come from process control experts working with continuous systems.

According to Noyes, virtualization’s established ability to deliver at critical levels of performance makes it suitable for addressing several industrial challenges:

- Reliability and availability, such as fault tolerance with no single point of failure and with minimal loss of service or data on failover.

- Device management to address live patching issues and the evolution of services with minimal disruption, along with the ability to unlock data at the edge.

- Performance scalability to support critical industrial apps and services.

- An on-premise cloud platform that can connect from the device to the data center.

- Security for applications ranging from air-gapped systems to modern IT system architectures using hardware root of trust and authorization.

Noyes said the goal of Titanium Control is to “extend the control loop beyond the physical device on the factory floor. [Titanium Control] allows aggregates of multiple loops and data sets and provides a platform for additional operational process like analytics. It also supplies an interface for exposing data to an asynchronous learning loop for machine learning applications.”
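To make the idea of aggregating multiple loops and feeding an asynchronous consumer more concrete, here is a minimal Python sketch. It is purely illustrative and does not use any Wind River or Titanium Control API. Every name in it (`Sample`, `run_control_loop`, `aggregate`, the loop names and gain values) is a hypothetical stand-in, and the simple proportional control step and per-loop averaging are assumptions chosen only to keep the example short.

```python
import queue
import statistics
import threading
from dataclasses import dataclass


@dataclass(frozen=True)
class Sample:
    """One reading published by a control loop (hypothetical structure)."""
    loop_id: str
    value: float


def run_control_loop(loop_id, readings, setpoint, gain, out):
    """Compute a proportional control output for each reading.

    Each reading is also placed on the shared queue `out`, so a separate
    consumer can analyze it later without the loop waiting on that consumer.
    """
    outputs = []
    for value in readings:
        outputs.append(gain * (setpoint - value))  # proportional term only
        out.put(Sample(loop_id, value))
    return outputs


def aggregate(out, expected):
    """Consume `expected` samples and return the mean reading per loop."""
    by_loop = {}
    for _ in range(expected):
        sample = out.get()  # blocks until a sample is available
        by_loop.setdefault(sample.loop_id, []).append(sample.value)
    return {loop: statistics.mean(vals) for loop, vals in by_loop.items()}


def demo():
    """Run two loops while a consumer thread aggregates their data."""
    out = queue.Queue()
    result = {}
    consumer = threading.Thread(
        target=lambda: result.update(aggregate(out, expected=4))
    )
    consumer.start()
    run_control_loop("flow", [10.0, 12.0], setpoint=11.0, gain=0.5, out=out)
    run_control_loop("temp", [70.0, 74.0], setpoint=72.0, gain=0.5, out=out)
    consumer.join()
    return result


if __name__ == "__main__":
    print(demo())
```

In this sketch the control calculation never waits on the analytics side: the loops only enqueue their readings, and the consumer thread drains the queue on its own schedule. A learning or analytics process could take the place of `aggregate` under the same arrangement.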

One benefit of an on-premise cloud platform like Titanium Control, said Noyes, is that it can provide seamless failover because it resides alongside legacy infrastructure. It also reduces operating costs by running on a server blade. And when it comes to testing components from different vendors before deployment in the plant, the time to perform this task is reduced because Titanium Control gives full visibility and control to operations and management where needed, Noyes said.
