The Coming Age of Vision-Guided Robots

If you’ve ever seen an industrial robot demonstration, chances are it was a pick-and-place application. In fact, one of the first industrial robots, if not the first, was developed by Unimation and was referred to as a programmable transfer machine. It basically moved an object from one point to another, i.e., pick and place. While pick-and-place robotics has undergone numerous technological advances since the introduction of Unimation’s programmable transfer machines, one of the biggest advances affecting robotics of all kinds today is new vision technology.

“The incorporation of vision enables a robot to pick and place a variety of parts as well as change tasks because it can see which part it is dealing with and adapt,” says Carlton Heard, vision product marketing manager at National Instruments. “An added benefit of using vision for pick-and-place guidance is that the same images can be used for in-line inspection of the parts being handled. Rather than waiting to perform visual inspection at the end of the production process, with vision as part of the process it can be done at any point in the factory line.”

Embedded computer processing capabilities are what enable this greater incorporation of vision with robotics. Heard notes that tighter systems integration, along with the execution of multiple automation tasks on the same hardware platform using FPGAs and CPUs, permits applications like visual servo control and dynamic manipulation.

“Visual servo control can solve some high-performance control challenges by using imaging as a continual feedback device for a robot or motion control system,” Heard says. “In some applications, this type of image feedback technique has even been used to replace other feedback mechanisms such as encoders.”
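To make the idea of imaging as a continual feedback device more concrete, here is a minimal Python sketch of a proportional image-based servo loop. It is an illustration under stated assumptions, not the method Heard or National Instruments describes: the function names, the gain, the pixel tolerance, and the simulated motion model (the tracked feature shifts in the image by exactly the commanded amount) are all hypothetical. In a real system the feature position would come from a camera and the command would go to a motion controller.

```python
import math


def visual_servo_step(feature_px, target_px, gain=0.5):
    """Return a motion command proportional to the image-space error.

    feature_px: (x, y) pixel position where the tracked feature currently appears.
    target_px:  (x, y) pixel position where we want the feature to appear.
    gain:       hypothetical proportional gain (0 < gain <= 1 for this simple model).
    """
    error_x = target_px[0] - feature_px[0]
    error_y = target_px[1] - feature_px[1]
    return (gain * error_x, gain * error_y)


def run_servo_loop(start_px, target_px, gain=0.5, tolerance_px=0.5, max_steps=100):
    """Simulate a closed loop in which the image is the only feedback source.

    Assumption: each command shifts the feature in the image by exactly the
    commanded number of pixels. A real system would grab a new camera frame
    and re-detect the feature instead of updating it analytically.
    """
    feature = start_px
    for step in range(max_steps):
        # Measure the remaining error in the image (stand-in for frame + detection).
        distance = math.hypot(target_px[0] - feature[0], target_px[1] - feature[1])
        if distance <= tolerance_px:
            return feature, step
        # Compute and apply the correction, then loop back to measure again.
        dx, dy = visual_servo_step(feature, target_px, gain)
        feature = (feature[0] + dx, feature[1] + dy)
    return feature, max_steps


if __name__ == "__main__":
    final_px, steps = run_servo_loop((100.0, 50.0), (320.0, 240.0))
    print(f"Converged to {final_px} after {steps} steps")
```

The point of the sketch is that no encoder reading appears anywhere in the loop: the only measurement is the feature's position in the image, which is the sense in which vision can stand in for other feedback mechanisms.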

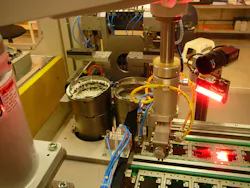

Semiconductor wafer processing is one manufacturing area in particular where visual servo control has already been put to use. Heard says new machines in this industry are incorporating vision with motion control to “accurately detect minor imperfections in freshly cut chips. These machines can now intelligently adjust the cutting process to compensate for imperfections and increase yields in manufacturing.”

Another growing field for vision-enabled robots is mobile robotics. These robots need sophisticated imaging capabilities to perform tasks ranging from obstacle avoidance to visual simultaneous localization and mapping. “In the next decade, the number of vision systems used by autonomous robots should eclipse the number of systems used by fixed-base robot arms,” Heard predicts.

“We are now at a point where processing power and low-cost imaging sensor availability are no longer limiting factors” in the use of vision, Heard says. “A great example of this is the Microsoft Kinect, which has APIs for use in areas outside of gaming. An intelligent robot or machine can now be built by anyone who can mount a Kinect onto another system. The hardware is there, and readily available software for embedded vision will be the catalyst for even further growth in mobile robotics.”

Read more about how low-cost computer vision is impacting robotics in one of my recent blog posts—Computer Vision: An Opportunity or Threat?

About the Author

David Greenfield, editor in chief
