A Data Strategy to Maximize Performance

Manufacturers today find themselves squeezed on all sides by conflicting demands. Corporate strategies call for increasing throughput while cutting operating costs. Government directives push for reduced energy consumption. Utilities happily send along power bills while cranking up the rates for excess usage. The goal is to do more with less, but how? The answer is factory visibility, based on the principle that data gathered from intelligent, interconnected equipment on the production floor can deliver real-time insights to optimize operational efficiency and support strategic planning.

Mitsubishi applied this approach to its own facilities, developing custom equipment to provide the information it needed to fine-tune operations and cut waste. Recognizing that other organizations face the same challenges, it has made these offerings available as part of a conceptual framework known as e-F@ctory. Supported by the rich and vibrant ecosystem of the e-F@ctory Alliance, e-F@ctory makes it easy to extract data from the shop floor and convert it to highly consumable forms for use by everyone from operators to upper management. In this series of newsletters, we will focus on data-centric, e-F@ctory-style approaches to maximizing productivity and profitability, highlighting the technologies necessary to reduce the risk of downtime.

Overall equipment effectiveness (OEE), the mantra of modern manufacturing, is the product of availability, performance and quality. In practical terms, that means maximizing uptime, running at full speed and producing as many good parts as possible. That’s easier than ever using the latest generation of smart, networked components that are designed to deliver the intelligence necessary to support higher OEE. Higher current draw in a drive, for example, might provide advance notice of a worn bearing before it fails. Temperature rise in a gearbox could indicate lubricant breakdown. Machine data provides a treasure trove of clues that can be used by the entire organization to monitor factors like environmental conditions, machine structural health and production throughput. The challenge is extracting the data in the first place. That’s where a high-speed data logger can help.

Capturing data
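Once machine data is being captured, the three OEE factors can be computed directly from per-shift figures. The sketch below is a minimal illustration using the common textbook definitions (availability = run time / planned time, performance = ideal cycle time × total count / run time, quality = good count / total count). The `ShiftData` fields and the sample numbers are hypothetical and are not taken from any Mitsubishi product.

```python
from dataclasses import dataclass


@dataclass
class ShiftData:
    """Hypothetical per-shift figures, e.g. as collected by a data logger."""
    planned_time_min: float      # scheduled production time
    run_time_min: float          # time the machine was actually running
    ideal_cycle_time_min: float  # ideal time to make one part
    total_count: int             # all parts produced
    good_count: int              # parts that passed inspection


def oee(s: ShiftData) -> tuple[float, float, float, float]:
    """Return (availability, performance, quality, OEE) as fractions."""
    availability = s.run_time_min / s.planned_time_min
    performance = (s.ideal_cycle_time_min * s.total_count) / s.run_time_min
    quality = s.good_count / s.total_count
    return availability, performance, quality, availability * performance * quality


if __name__ == "__main__":
    shift = ShiftData(planned_time_min=480, run_time_min=420,
                      ideal_cycle_time_min=0.5, total_count=760, good_count=722)
    a, p, q, total = oee(shift)
    print(f"availability={a:.1%} performance={p:.1%} quality={q:.1%} OEE={total:.1%}")
```

For the sample shift this prints `availability=87.5% performance=90.5% quality=95.0% OEE=75.2%`. The product also simplifies to good count × ideal cycle time / planned time, which makes a handy cross-check on logged data.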

When it comes to data, how you acquire it is just as important as what you acquire. Your sampling rate needs to be fast enough to capture the events of interest. If you’re trying to find the root cause of a failure, you’ll want triggering and event-logging capabilities so that the exact conditions around the fault are known. At a minimum, you need to be able to monitor all of the components of interest, and to get that data analyzed and relayed quickly to the controllers or people in a position to act on it.

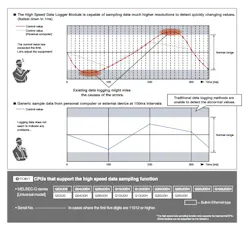

The workhorse of industrial data monitoring is the data logger. Most CPUs, whether in an industrial PC or machine PLC, have some sort of built-in data logger. The problem is that they tend to be slow and offer only limited functionality. The performance might be adequate for simple scenarios, but not for the types of applications that dominate manufacturing. A dedicated data logger provides a better solution.

With the ability to harvest data from dozens of CPUs, a dedicated data logger does a better job of monitoring the huge numbers of components required to truly extract value in a visible factory. Because they are purpose-built, these types of data loggers typically offer sampling intervals one or two orders of magnitude smaller than CPU-based data loggers. That level of granularity provides the kind of visibility necessary to track down transient faults or discover the differences in operating parameters that might be responsible for one shift making more product than another (see figure 1). You can diagnose problems you knew existed but just couldn’t see, or uncover developing issues before they impact operations.
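To see why the sampling interval matters for transient faults, the sketch below samples a simulated drive-current signal containing a single 5 ms spike at three different intervals. The signal shape, its timing and the 100 ms, 10 ms and 1 ms intervals are assumptions chosen for illustration. A transient is only guaranteed to land on at least one sample if the sampling interval is no longer than the transient itself; coarser intervals catch it only by luck of timing.

```python
def motor_current(t_ms: float) -> float:
    """Hypothetical drive current in amps: a 10 A baseline with one 5 ms spike to 18 A."""
    spike_start_ms, spike_len_ms = 1234.0, 5.0
    if spike_start_ms <= t_ms < spike_start_ms + spike_len_ms:
        return 18.0
    return 10.0


def peak_seen(interval_ms: float, window_ms: float = 2000.0) -> float:
    """Largest value a logger sampling every `interval_ms` would record over the window."""
    n_samples = int(window_ms / interval_ms)
    return max(motor_current(i * interval_ms) for i in range(n_samples))


if __name__ == "__main__":
    for interval in (100.0, 10.0, 1.0):
        print(f"{interval:>5} ms interval -> peak {peak_seen(interval)} A")
```

With these assumptions, both the 100 ms and 10 ms loggers report a flat 10 A; only the 1 ms logger records the 18 A spike.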

The challenge with this type of monitoring is to avoid capturing data for data’s sake. The first step is to define your objectives and determine what you need to monitor them effectively. Are you monitoring machine health or the environment? Is it more important to track throughput or actual operating rate? Before you collect data, have a strategy for how you plan to use it.

Data logger functionality like triggering can streamline the capture process. High-frequency data acquisition presents the clearest picture of activity, but the enormous volume of data involved can quickly fill available memory with records of normal operation. User-defined triggering enables you to set thresholds on the parameters of interest. When a fault occurs, the logger should be able to draw on its data buffer to extract a record of conditions immediately before the event, as well as during and immediately after it. By recording data only when anomalies occur, you save time that would otherwise be spent analyzing unremarkable numbers and reduce your data storage demands.
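Pre-trigger and post-trigger capture of this kind is commonly built on a ring buffer that always holds the most recent samples. The sketch below is a simplified, hypothetical version: a fixed-length `deque` keeps the last `pre` samples, a sample above `threshold` starts a record, and the next `post` samples complete it. Real loggers add timestamps, multiple channels and richer trigger conditions; this shows only the core idea of a level-triggered record.

```python
from collections import deque
from typing import Iterable, List, Optional


def capture_events(samples: Iterable[float], threshold: float,
                   pre: int, post: int) -> List[List[float]]:
    """Return one record per trigger: up to `pre` samples before the trigger,
    the triggering sample itself, and up to `post` samples after it."""
    history: deque = deque(maxlen=pre)  # ring buffer of the most recent samples
    records: List[List[float]] = []
    current: Optional[List[float]] = None  # record being filled after a trigger
    remaining = 0                          # post-trigger samples still needed

    for x in samples:
        if current is not None:
            # Inside the post-trigger window: keep every sample.
            current.append(x)
            remaining -= 1
            if remaining == 0:
                records.append(current)
                current = None
        elif x > threshold:
            # Trigger: snapshot the pre-trigger history plus this sample.
            current = list(history) + [x]
            remaining = post
            if remaining == 0:
                records.append(current)
                current = None
        history.append(x)  # the ring buffer always tracks the latest samples

    if current is not None:
        records.append(current)  # stream ended before the post window filled
    return records


if __name__ == "__main__":
    stream = [1.0, 1.0, 2.0, 1.0, 9.0, 8.0, 1.0, 1.0, 1.0, 1.0]
    print(capture_events(stream, threshold=5.0, pre=2, post=2))
```

For the sample stream this prints `[[2.0, 1.0, 9.0, 8.0, 1.0]]`: five of the ten samples are kept (two before the trigger, the trigger itself and two after), and the unremarkable tail is discarded.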

Auto logging is another key function. Although OEMs and system integrators increasingly use web-enabled systems to troubleshoot and even correct problems, remote monitoring simply can’t match the sampling rates delivered by local capture. Auto-logging capabilities allow off-site specialists to configure the data logger to acquire data automatically with minimal effort on the part of the end user.

The granularity and intelligence of the modern visible factory supports informed decision-making and improves operations at every level. It has to be properly executed, however, starting with data acquisition. High-speed data loggers play an essential role in that process, collecting and delivering data with minimal time and effort from both machine builder and end user. Find out what a dedicated high-speed data logger can do for you.

Further reading

Meet the Tools: High-Speed Data Logger

Mitsubishi’s High-Speed Data Logger is a dedicated module that can interface with up to 64 CPUs to gather a range of data types with a minimum of effort. Its 1-ms sampling interval provides far more granularity than a typical built-in CPU data logger. Continuous-mode operation coupled with a customizable triggering function ensures that the module acquires data immediately before, during and after an anomalous event to improve troubleshooting while minimizing memory usage.
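The memory saving from triggered capture is easy to estimate. The sketch below compares a day of continuous logging with trigger-only recording at a 1 ms interval. The channel count (32), value size (4 bytes), event rate (20 per day) and 1 s pre- and post-trigger windows are all assumptions for illustration, and timestamps and file overhead are ignored.

```python
MS_PER_DAY = 24 * 60 * 60 * 1000  # 86,400,000 ms


def continuous_bytes_per_day(interval_ms: int, channels: int, bytes_per_value: int) -> int:
    """Storage for logging every channel at every sample, all day."""
    samples = MS_PER_DAY // interval_ms
    return samples * channels * bytes_per_value


def triggered_bytes_per_day(interval_ms: int, channels: int, bytes_per_value: int,
                            events_per_day: int, pre_ms: int, post_ms: int) -> int:
    """Storage for logging only a pre/post window around each triggered event."""
    samples_per_event = (pre_ms + post_ms) // interval_ms
    return events_per_day * samples_per_event * channels * bytes_per_value


if __name__ == "__main__":
    cont = continuous_bytes_per_day(1, 32, 4)
    trig = triggered_bytes_per_day(1, 32, 4, events_per_day=20, pre_ms=1000, post_ms=1000)
    print(f"continuous: {cont / 1e9:.2f} GB/day")
    print(f"triggered:  {trig / 1e6:.2f} MB/day ({cont // trig}x less)")
```

Under these assumptions, continuous capture needs about 11.06 GB per day versus about 5.12 MB for triggered capture, roughly 2,160 times less.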

The High-Speed Data Logger’s auto-logging function allows OEMs and integrators to troubleshoot remotely while taking advantage of high-speed local data capture. They can send the end user a CompactFlash card with a configuration file. The user simply plugs the card into their data logger and runs the machine as usual, then sends the card back to their service engineer for analysis. Auto logging can speed response time and in many cases eliminate the need for a site visit entirely.

The system presents data in easily consumable forms, generating automatic reports and custom templates and even doing some basic on-board analysis. For more information, see the datasheet.