Role of Advanced Software in the Refinery of the Future

A quick look at the numbers shows it: Despite significant energy efficiency steps taken in the United States, Canada and Europe over the last several years, oil and gas use is expected to rise significantly in the near future. Currently, around 89 million barrels of oil are consumed daily worldwide. And while a lot of attention is paid to the roughly 22 barrels consumed per capita in the U.S. each year, many aren’t aware that, in the tiny country of Kuwait, consumption clocks in at around 53 barrels per capita per year.

According to Martin Turk, Ph.D., director of hydrocarbon processing industry solutions for Schneider Electric, per-capita consumption is so high in countries like Kuwait, Qatar and the United Arab Emirates for two reasons: the supply of oil and gas is plentiful and, therefore, cheap; and these countries have implemented relatively few efficiency measures. Looking at global consumption numbers, and considering that China and India currently consume only about 2 barrels per capita per year, a great deal of consumption growth lies ahead as these countries’ economies continue to develop.
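The global figure above is quoted per day while the per-capita figures are quoted per year, so a quick conversion helps put them on one basis. The sketch below is only illustrative: the world population value is an assumption that does not come from the article, and the China and India figure is read as an annual one, like the others.

```python
# Put the article's daily global figure and annual per-capita figures on one basis.
GLOBAL_BARRELS_PER_DAY = 89e6   # from the article
WORLD_POPULATION = 7.1e9        # ASSUMPTION: rough world population, not from the article
DAYS_PER_YEAR = 365


def per_capita_per_year(barrels_per_day: float, population: float) -> float:
    """Convert a total daily consumption into barrels per person per year."""
    return barrels_per_day * DAYS_PER_YEAR / population


world_avg = per_capita_per_year(GLOBAL_BARRELS_PER_DAY, WORLD_POPULATION)

# Annual per-capita figures quoted in the article.
quoted = {"Kuwait": 53.0, "United States": 22.0, "China/India": 2.0}

print(f"World average: {world_avg:.1f} bbl/person/yr")
for country, barrels in quoted.items():
    ratio = barrels / world_avg
    print(f"{country}: {barrels:.0f} bbl/person/yr, {ratio:.1f}x world average")
```

Under that population assumption, the world average works out to between 4 and 5 barrels per person per year, which is why the Kuwaiti and U.S. figures stand out and why growth in China and India matters so much.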

Refineries must profitably address the coming consumption increases along with several current industry trends: proactive safety and maintenance; the need for engineering drawings and documents to reflect the as-operated state of plants; more effective handling of the range of properties of unconventional crudes (e.g., from the oil sands and shale areas); and a continued emphasis on minimizing energy usage. To do so, Turk says, refineries will increasingly have to focus on “maximizing the value of every molecule processed.”

Moving Forward

To reach the point of maximizing the value of every molecule processed, Turk points to four key computing advances:

- Computer power advances will allow optimization technology and mathematical solvers to run rigorous online models that can be used for refinery-wide optimization, including planning and scheduling, without requiring individual process unit optimizers;

- Models will form the core of asset value optimization, with model rigor matched to end-user requirements;

- Industry-leading refiners will use techniques including compositional analysis and characterization of feedstocks and component-based reactor models; and

- Equipment and process performance management will be highly automated, context-sensitive and predictive in nature.
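To make the idea of optimization over a model concrete, here is a deliberately tiny sketch of feedstock selection. Everything in it is hypothetical: the two crudes, their margins and sulfur contents, the capacity and the sulfur limit are invented for illustration, and a brute-force grid search stands in for the mathematical solvers and rigorous models Turk describes.

```python
# Toy feedstock-selection problem. ALL figures are hypothetical, chosen only
# to illustrate the shape of the problem, not taken from any real refinery.
LIGHT_MARGIN = 6        # $ per barrel, hypothetical light sweet crude
HEAVY_MARGIN = 9        # $ per barrel, hypothetical heavy sour crude
LIGHT_SULFUR = 30       # sulfur content in hundredths of wt% (0.30 wt%)
HEAVY_SULFUR = 250      # 2.50 wt%
MAX_SULFUR = 140        # blended limit, 1.40 wt%
CAPACITY = 100_000      # barrels per day


def best_blend(step: int = 1_000) -> tuple[int, int, int]:
    """Grid-search the (light, heavy) volumes that maximize daily margin.

    Returns (margin, light_barrels, heavy_barrels). Integer arithmetic keeps
    the sulfur check exact.
    """
    best = (0, 0, 0)
    for light in range(0, CAPACITY + 1, step):
        for heavy in range(0, CAPACITY - light + 1, step):
            # Blended sulfur must not exceed the limit (cross-multiplied form).
            sulfur_load = LIGHT_SULFUR * light + HEAVY_SULFUR * heavy
            if sulfur_load > MAX_SULFUR * (light + heavy):
                continue
            margin = LIGHT_MARGIN * light + HEAVY_MARGIN * heavy
            if margin > best[0]:
                best = (margin, light, heavy)
    return best


result = best_blend()
print(f"margin=${result[0]:,}/day light={result[1]:,} bbl heavy={result[2]:,} bbl")
```

The more profitable heavy crude is capped by the sulfur limit, so the best plan blends the two rather than running either alone. A production planner would replace the grid search with a linear or nonlinear solver and the constants with rigorous process models.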

To apply such computing advances across the cycle of model-based activities involved in a refinery—planning, executing and measuring—and achieve the level of integrated operations management wherein the entire business control loop is automated, Turk cites several core technology requirements. These requirements include:

- A common information model to ensure interoperability across multiple vendors’ software applications using industry standards such as B2MML;

- Information mining tools that use pattern recognition to determine where the process is moving based on past behavior and make recommendations for model changes to correctly manage the new operating point;

- Master data management for a “single source of truth”;

- A network architecture that treats mobile devices as “first class citizens”;

- Elimination of the traditional divide between steady-state and dynamic rigorous models;

- Defense-in-depth approach to cybersecurity;

- Site-wide wireless/cellular network with redundancy;

- Use of a cloud architecture for sharing information, obtaining remote expert advice or benchmarking and comparing plant performance;

- Operations center collaboration technology that can aggregate maps, videos, applications views, television news feeds, remote collaboration and annotation, as well as decision support desks;

- Immersive 3D visualization and navigation technology for training; and

- Human-like robots to replace field operators and maintenance personnel for work in hazardous areas.
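The information mining requirement in the list above, determining where the process is moving based on past behavior, can be illustrated with a very simple trend estimate. This is a minimal sketch under stated assumptions: a least-squares line stands in for whatever pattern recognition a real tool would use, and the function names, temperature readings and model validity range are all hypothetical.

```python
from statistics import mean


def trend_slope(values: list[float]) -> float:
    """Least-squares slope of the readings against their sample index."""
    n = len(values)
    if n < 2:
        raise ValueError("need at least two readings to estimate a trend")
    x_bar = (n - 1) / 2
    y_bar = mean(values)
    num = sum((i - x_bar) * (v - y_bar) for i, v in enumerate(values))
    den = sum((i - x_bar) ** 2 for i in range(n))
    return num / den


def steps_until_outside(values: list[float], low: float, high: float):
    """Estimate how many samples remain before the trend leaves [low, high].

    Returns 0 if the latest reading is already outside the range, and None
    if the readings show no trend at all.
    """
    last = values[-1]
    if last < low or last > high:
        return 0
    slope = trend_slope(values)
    if slope > 0:
        return (high - last) / slope
    if slope < 0:
        return (low - last) / slope
    return None


# Hypothetical reactor temperatures (deg C) and the range over which a
# hypothetical process model is considered valid.
history = [350.0, 350.5, 351.0, 351.5, 352.0]
steps = steps_until_outside(history, low=345.0, high=355.0)
if steps is not None:
    print(f"Trend leaves the model's valid range in about {steps:.0f} samples")
```

A projection like this is the kind of signal that could prompt a recommendation to update the model before the process reaches its new operating point.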

Realizing the Vision

Though some of Turk’s vision is more long-term than near-term, work to align refinery business strategy with execution along these lines is already underway at Schneider Electric. The plan is to create a model-based lifecycle process for refineries that addresses design, operation and optimization.

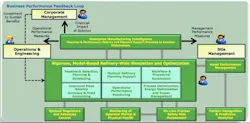

Using such software, model-based refinery-wide simulation and optimization can address feedstock selection planning & scheduling, refinery planning support, work & procedural automation, operations & performance monitoring, process & energy optimization and power management, and mass balance accuracy & yield accounting in alignment with each other, rather than as separate operations. Processing of operations in this way will power a business feedback loop that connects corporate management, operations & engineering, and site management with one system, Turk said.

With this approach, Turk said, the goal of automating the business control loop can be achieved, giving every level of decision-making within a refinery the ability to maximize the value of every molecule processed.

As far-reaching as these capabilities may seem, it’s important to realize that Schneider Electric’s release this week of SimSci Spiral Suite software already touches on a number of the points outlined in Turk’s vision for the refinery of the future. Whereas the next generation of Schneider Electric simulation software will focus on internal refinery operations and business optimization, Spiral Suite is focused on optimizing the supply chain interactions connecting the upstream and downstream sides of oil & gas extraction, processing and delivery.