How Smart Cameras Help Solve Some of Manufacturing’s Biggest Issues

The application of artificial intelligence (AI) in automation technologies already spans a wide scope, even though the technology is still very much in its early stages. From guiding autonomous mobile robots and dramatically improving quality inspection to control logic, food safety, and predictive maintenance, it’s clear that AI will be playing a critical role in automation for the foreseeable future.

Safety

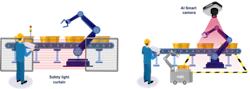

When it comes to worker safety in industrial environments, light curtains are among the most frequently used technologies. These devices protect personnel from injury by creating a sensing screen that guards machine access points and perimeters.

Conventional machine vision systems using IP cameras and AI modules also often have considerable latency issues, rendering them less than ideal in applications requiring an immediate response.

AdLink's Neon-2000 series AI smart camera has been designed to address this latency problem. “It captures images and performs all AI-related operations before sending results and instructions to related equipment, such as a robotic arm,” said Yang. “The real-time machine vision AI of the Neon-2000 series offers additional benefits to augment worker safety by alerting users if they enter an unsafe zone and logging that information for retraining purposes. For example, if a worker approaches a hazardous area, instead of the robotic arm shutting down completely, it could go into a functional safety process loop. Routines such as these not only improve worker safety but also increase the factory's operating efficiency.”
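The zone-based response Yang describes can be illustrated with a minimal Python sketch. It is not AdLink's implementation: the `Zone` rectangle, the `RobotMode` states, and the two-tier warning/danger layout are all assumptions made for illustration, and worker positions are assumed to arrive as pixel coordinates from some upstream detector.

```python
from dataclasses import dataclass
from enum import Enum


class RobotMode(Enum):
    # Hypothetical states; the article only says the arm need not shut down completely.
    NORMAL = "normal"
    REDUCED_SPEED = "reduced_speed"
    STOPPED = "stopped"


@dataclass(frozen=True)
class Zone:
    """Axis-aligned rectangle in image coordinates (pixels). Hypothetical type."""
    x_min: float
    y_min: float
    x_max: float
    y_max: float

    def contains(self, x: float, y: float) -> bool:
        return self.x_min <= x <= self.x_max and self.y_min <= y <= self.y_max


# Higher number means a more restrictive response.
_SEVERITY = {RobotMode.NORMAL: 0, RobotMode.REDUCED_SPEED: 1, RobotMode.STOPPED: 2}


def decide_mode(worker_xy, warning_zone: Zone, danger_zone: Zone) -> RobotMode:
    """Map one worker position to a robot mode (assumed two-tier policy)."""
    x, y = worker_xy
    if danger_zone.contains(x, y):
        return RobotMode.STOPPED
    if warning_zone.contains(x, y):
        return RobotMode.REDUCED_SPEED
    return RobotMode.NORMAL


def process_detections(detections, warning_zone, danger_zone, event_log):
    """Return the most restrictive mode across all workers and log zone entries."""
    mode = RobotMode.NORMAL
    for xy in detections:
        candidate = decide_mode(xy, warning_zone, danger_zone)
        if candidate is not RobotMode.NORMAL:
            # Logged events could later feed retraining, as the article suggests.
            event_log.append({"position": xy, "mode": candidate.value})
        if _SEVERITY[candidate] > _SEVERITY[mode]:
            mode = candidate
    return mode
```

Running the decision on the camera itself, rather than on a separate server, is what would keep the response time short in a setup like this one.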

Operator efficiency

In manufacturing, cycle time is a key measure of production efficiency: it is the total time spent producing an item until the product is ready for shipment.

Yang said that using AI smart camera technology to monitor employee behavior and position helps enforce standard operating procedures and improve worker efficiency, thereby reducing cycle time. This technique, often referred to as “pose tracking” or “pose detection,” represents a body's position and movement with a set of skeletal landmark points, such as a hand, elbow, or shoulder.
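To make the idea of skeletal landmarks concrete, here is a small Python sketch. It assumes a pose arrives as a dictionary mapping landmark names to 2D image coordinates. The landmark names, the `joint_angle` helper, and the `hand_in_region` check are hypothetical and not taken from any particular pose-detection library.

```python
import math


def joint_angle(a, b, c) -> float:
    """Angle in degrees at landmark b, formed by the segments b->a and b->c."""
    angle = math.degrees(
        math.atan2(c[1] - b[1], c[0] - b[0]) - math.atan2(a[1] - b[1], a[0] - b[0])
    )
    angle = abs(angle) % 360
    # Report the interior angle, which is always in the range 0..180.
    return 360 - angle if angle > 180 else angle


def hand_in_region(pose, region) -> bool:
    """Check whether the hand landmark lies inside a rectangular work region.

    region is (x_min, y_min, x_max, y_max) in the same units as the pose.
    """
    x, y = pose["hand"]
    x_min, y_min, x_max, y_max = region
    return x_min <= x <= x_max and y_min <= y <= y_max


# One frame of a (made-up) pose: three landmarks on a single arm.
pose = {"shoulder": (0.0, 0.0), "elbow": (1.0, 0.0), "hand": (1.0, 1.0)}
elbow_bend = joint_angle(pose["shoulder"], pose["elbow"], pose["hand"])
```

A procedure check could then be written as a sequence of such tests per frame, for example confirming that the hand reached a parts bin before the next assembly step.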

He added that tracking whether an operator is present at their workstation on the production line also automates and verifies timesheets. “Monitoring that they are actively following the standard operating procedures ensures quality control and line balancing,” Yang noted.
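As a rough illustration of how presence tracking could feed a timesheet, the Python sketch below totals the time an operator was detected at a workstation. The input format (time-ordered pairs of a timestamp in seconds and a presence flag) and the function name `presence_seconds` are assumptions. Each sample is treated as valid until the next one arrives.

```python
def presence_seconds(samples):
    """Sum the time an operator was present.

    samples: list of (timestamp_seconds, is_present) tuples, sorted by time.
    Each sample's state is assumed to hold until the next sample.
    """
    total = 0.0
    for (t0, present), (t1, _) in zip(samples, samples[1:]):
        if present:
            total += t1 - t0
    return total


# Made-up observations: present from 0 s to 20 s, away until 30 s, present until 40 s.
samples = [(0, True), (10, True), (20, False), (30, True), (40, False)]
total_present = presence_seconds(samples)
```

The final sample contributes no time of its own because nothing follows it, so a real system would need to decide how to close out the last interval of a shift.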

Quality control

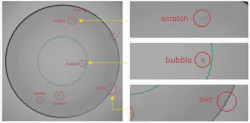

Manual product quality inspection is time-consuming, often inconsistent, and can ultimately create bottlenecks in the production line. Conventional automated optical inspection (AOI) machine vision can detect easy-to-find defects faster than humans, but when a fault is difficult to detect, such as a flaw on a contact lens, these machine vision systems reach their limits in terms of accuracy and consistency, said Yang.

Yang noted that AI smart cameras can inspect 50x more lenses than manual visual inspection, with accuracy improvements ranging from 30% to 95%.

Vision analytics

According to Yang, the AI machine vision applications he noted above require AI algorithms for deep learning. “The software experts that develop AI algorithms need a smart, reliable platform for executing AI model inferencing,” he said. “AI smart cameras with pre-installed edge vision analytics (EVA) software address many issues common to conventional AI vision systems, improve compatibility, speed up installation, and minimize maintenance issues.”

He added that EVA also helps shorten smart camera deployment time.

“It may take engineers as long as 12 weeks to conduct a proof of concept (PoC) for an AI vision project, because it takes considerable time to overcome the learning curve of choosing optimized cameras and the AI inference engine to be used, retraining AI models, and optimizing video streams,” he said. “However, EVA software simplifies these steps with its pipeline structure and shortens the PoC time by up to 2 weeks.”

About the Author

David Greenfield, editor in chief
